tP: Practical Exam (PE)

PE Overview

- The PE is not an entirely pleasant experience, but it is an essential component that aims to increase the quality of the tP work and the rigor of tP grading.

The PE is divided into four phases:

- Phase 1: Bug Reporting: In this phase, you will test the allocated product and report bugs, similar to PE-D. Done during week 13 lecture slot, and further divided into parts I, II, and III.

- Phase 1 - part I Product Testing [60 minutes] -- to report bugs in the product (but can report documentation bugs too)

- Phase 1 - part II Evaluating Documents [30 minutes] -- to report bugs in the UG and DG only

- Phase 1 - part III Overall Evaluation [15 minutes] -- to give overall evaluation of the product, documentation, effort, etc.

- Phase 2: Developer Response: This phase is for you to respond to the bug reports you received. Done during Sat-Mon after PE.

- Phase 3: Tester Response: In this phase you will receive the dev team's response to the bugs you reported, and will give your own counter response (if needed). Done during Tue-Thu after PE.

- Phase 4: Tutor Moderation: In this phase tutors will look through all dev responses you objected to in the previous phase and decide on a final outcome. Students are not usually involved in this phase.

Grading:

- Your performance in the practical exam will affect your final grade and your peers', as explained in the Admin: Project Grading section.

- As such, we have put in measures to identify and penalize insincere/random evaluations.

PE Preparation, Restrictions

- Mode: you can choose between remote or F2F (we'll use a Canvas survey to collect your preferred mode):

- Remote mode: This is the recommended mode. Proctored via Zoom. You'll need to join the Zoom session from a quiet place (i.e., conducive to an exam) at which you can set up a Zoom device for proctoring.

Choose this mode only if you are able to comply with the Zoom proctoring requirements given further down.

- F2F mode: Attend the PE at the lecture venue (LT15).

Choose this mode only if you are unable to use the remote mode (reason: the higher the number of F2F attendees, the higher the risk of Wi-Fi speed issues and GitHub throttling issues).

Those opting for the F2F mode can ignore any Zoom-related points in the instructions below.

When: Last lecture slot of the semester (Fri, Apr 19th). Remember to join 15-30 minutes earlier than usual lecture start time. The Zoom link will be given to you closer to the day.

PE Phase 1 will be conducted under exam conditions. For the remote mode, we will be following the SoC's E-Exam SOP, combined with the deviations/refinements given below. Any non-compliance will be dealt with in the same way as non-compliance in the final exam.

- Remote mode proctoring will be done via Zoom. No admission if the following requirements are not met.

- You need two Zoom devices (PC: chat, audio, video; Phone: video, audio), unless you have an external webcam for your PC.

- To change the Zoom display name as required by the PE, join the Zoom call, go to the 'Participants' panel, and search for yourself. Then, click on 'More', followed by 'Rename'.

- Add your `[PE_seat_number]` in front of the first name of your Zoom display name, in your Zoom devices. Seat numbers can be found here about 2 days before the PE. e.g., `[M18] John Doe` (`M18` is the seat number), or `[M18][PC] John Doe` (for the PC, if using a phone as well).

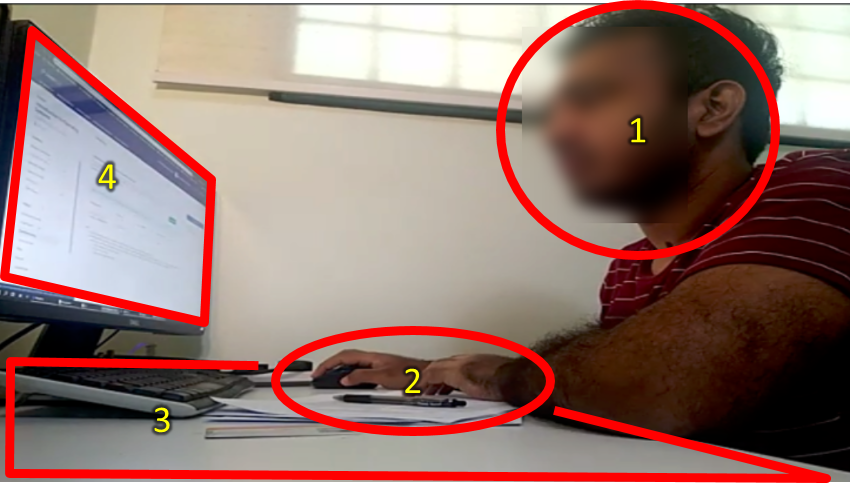

- Set your camera so that all the following are visible:

- your face (side view, no mask)

- your hands

- the work area (i.e., the table top)

- the computer screen

- Join the Zoom waiting room 15-30 minutes before the start time. Admitting you to the Zoom session can take some time.

If on F2F mode: Arrive at the lecture venue (LT15) around 10-15 minutes early.

- In case of Zoom outage, we'll fall back on MS Teams (MST). Make sure you have MST running and have joined the MST Team for the class.

- Recording the screen is not required.

- You are allowed to use head/ear phones. But no talking allowed (unless you are talking to the invigilator) -- so, no talking/singing to yourself as this can be mistaken for a rule violation.

- Only one screen is allowed (for both remote mode and F2F mode). If you want to use the secondary monitor, you should switch off the primary monitor. The screen being used should be fully visible in the Zoom camera view.

If using a second device for Zoom proctoring, the screen of that device should only be used for Zoom.

- Do not use the public chat channel to ask the prof questions. If you do, you might accidentally reveal which team you are testing.

- Do not use more than one CATcher instance at the same time. Our grading scripts will red-flag you if you use multiple CATcher instances in parallel.

- Use MS Teams (not Zoom) private messages to communicate with the prof. Zoom sessions are invigilated by tutors, not the prof.

- Do not view video Zoom feeds of others while the testing is ongoing. Keep the video view minimized.

- Bug reporting will be done using CATcher, similar to the PE-D.

- During the bug reporting periods (i.e., PE Phase 1 - part I and PE Phase 1 - part II), do not use websites/software not in the list given below. If you need to visit a different website or use another software, please ask for permission first.

- Website: Canvas

- Website/software: MS Teams (only to communicate with the prof or tech support)

- Website: Course website (e.g., to look up PE info)

- Software: CATcher, any text editor, any screen grab/recording software

- Software: PDF reader (to read the UG/DG or other references such as the textbook)

- Software: A text editor or word processing software (to keep notes while testing)

- Do not visit GitHub in PE Phase 1 - part I unless you are going there to download a file the team has provided and is needed for testing. You may visit GitHub during part II and part III.

- Do not use any other software running in the background e.g., Telegram chat.

- This is a manual testing session. Do not use any test automation tools or custom scripts.

- You may use any digital/physical notes during the PE e.g., a list of things to check.

- Recommended to read the guidelines the dev team will follow when responding to your bug reports later, given in the panel below. This will help you decide what kind of bugs to report.

- Bugs reported during the PE should be the result of your own testing. Reporting bugs found by others as your own will be reported as a case of academic dishonesty (severity is similar to cheating during the final exam).

- [Within 24 hours prior to the PE] Get CATcher 'warmed-up' for the PE.

Strongly recommended to do the following about a day in advance, so that there is enough lead time to sort out any CATcher-related problems before the PE.

- Log in to CATcher, while choosing `CS2103/T PE` as the session.

- Allow CATcher to create a repo named `pe`, when asked.

- Create a dummy bug report. Edit it. Delete it.

Caution: Do not reuse these dummy bug reports (i.e., by editing them later) to submit real PE bugs. As they were created outside the PE duration, they will be ignored by PE bug processing scripts.

- If you encounter any problems, post in the CATcher issue tracker.

PE Phase 1: Bug Reporting

In this phase, you will test the allocated product and report bugs, similar to PE-D. Done during week 13 lecture slot, and further divided into parts I, II, and III.

PE Phase 1 - Part I Product Testing [60 minutes]

Bonus marks for high accuracy rates!

You will receive bonus marks if a high percentage (e.g., some bonus if >50%, a substantial bonus if >70%) of your bugs are accepted as reported (i.e., the eventual type.* and severity.* of the bug match the values you chose initially and the bug is accepted by the team).

Take away: Aim for the correct severity/type etc. rather than the one that gives you most marks, as the former can end up earning you more marks in the end anyway.
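To make the 'accepted as reported' idea concrete, here is a minimal Python sketch of how such an accuracy rate could be computed. The `BugOutcome` class and its field names are hypothetical (they are not part of CATcher or the grading scripts), and the actual bonus calculation may differ.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BugOutcome:
    """Hypothetical record of one bug report and its final state."""
    reported_type: str
    reported_severity: str
    final_type: str
    final_severity: str
    accepted: bool  # True if the dev team accepted the bug


def accepted_as_reported(bug: BugOutcome) -> bool:
    # A bug counts only if it was accepted AND both labels stayed unchanged.
    return (bug.accepted
            and bug.reported_type == bug.final_type
            and bug.reported_severity == bug.final_severity)


def accuracy_rate(bugs: List[BugOutcome]) -> float:
    # Fraction of reports accepted as reported; 0.0 when nothing was reported.
    if not bugs:
        return 0.0
    return sum(accepted_as_reported(b) for b in bugs) / len(bugs)
```

Under this sketch, a tester whose severity is downgraded on one of four accepted bugs has a rate of 3/4, which is why choosing an inflated severity can cost more than it gains.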

Test the product and report bugs as described below. You may report both product bugs and documentation bugs during this period.

Testing instructions for PE and PE-D

a) Launching the JAR file

- Get the jar file to be tested:

- Download the latest JAR file from the team's releases page, if you haven't done this already.

- Download the zip file from the given location (to be given to you at least a few hours before the PE), if you haven't done that already.

- The file is zipped using a two-part password.

- We will email you the second part in advance (it's unique to each student). Keep it safe, and have it ready at the start of the PE.

- At the start of the PE, we'll give you the first part of the password (common to the whole class). Use the combined password to unzip the file, which should give you another zip file with the name suffix `_inner.zip`.

- Unzip that second zip file normally (no password required). That will give you a folder containing the JAR file to test and other PDF files needed for the PE. Warning: do not run the JAR file while it is still inside the zip file.

Ignore the `padding_file` found among the extracted files. Its only purpose is to mask the true size of the JAR file so that someone cannot guess which team they will be testing based on the zip file size.

- Recommended: Try the above steps using this sample zip file if you wish (first part of the password: `password1-`, second part: `password2` i.e., you should use `password1-password2` to unzip it).

Use the JAR file inside it to try the steps given below as well, to confirm your computer's Java environment is as expected and can run PE jar files.

- Put the JAR file in an empty folder in which the app is allowed to create files (i.e., do not use a write-protected folder).

In rare cases, the team could have submitted a ZIP file instead of a JAR file. In that case, unzip that file into the target folder.

- Open a command window. Run the `java -version` command to ensure you are using Java 11.

Do this again even if you did this before, as your OS might have auto-updated the default Java version to a newer version.

- Check the UG to see if there are extra things you need to do before launching the JAR file e.g., download another file from somewhere.

You may visit the team's releases page on GitHub if they have provided some extra files you need to download.

- Launch the jar file using the `java -jar` command rather than double-clicking (reason: to ensure the jar file is using the same java version that you verified above). Use double-clicking as a last resort.

We strongly recommend surrounding the jar filename with double quotes, in case special characters in the filename cause the `java -jar` command to break.

e.g., `java -jar "[CS2103-F18-1][Task Pro].jar"`

Windows users: use the DOS prompt or the PowerShell (not the WSL terminal) to run the JAR file.

Linux users: If the JAR fails with an error labelled `Gdk-CRITICAL` (happens in Wayland display servers), try running it using the `GDK_BACKEND=x11 java -jar jar_file_name.jar` command instead.
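If you would like to double-check the Java version programmatically before the PE (this is not something to run during the manual testing session itself), the following Python sketch shows one way to read the major version out of the text that `java -version` prints. The function names are hypothetical, and the regular expression assumes the common `version "…"` output format, which some Java distributions may not follow exactly.

```python
import re
from typing import List


def java_major_version(version_output: str) -> int:
    """Extract the major Java version from the text printed by `java -version`."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        raise ValueError("no version string found")
    major = int(match.group(1))
    # Java 8 and earlier use the legacy "1.x" scheme, e.g. "1.8.0_381".
    if major == 1 and match.group(2) is not None:
        return int(match.group(2))
    return major


def launch_command(jar_name: str) -> List[str]:
    # Passing the file name as its own list element plays the same role as
    # the double quotes on the command line: special characters such as
    # spaces and brackets reach Java unchanged.
    return ["java", "-jar", jar_name]
```

The list returned by `launch_command` is in the form accepted by `subprocess.run`. Note that `java -version` writes its output to the standard error stream, so capture that stream if you call it from a script.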

If the product doesn't work at all: If the product fails catastrophically e.g., cannot even launch, or even the basic commands crash the app, do the following:

- Check the UG of the team, to see if there are extra things you need to do before launching the JAR.

Confirm that you are using Java 11 and using the `java -jar` command to run the JAR, as explained in the points above.

- Contact our head TA via MS Teams (name: Kim Hyeongcheol, NUSNET: dcskh) and give him

(a) a screenshot of the error message, and

(b) your GitHub username.

- Wait for him to give you a fallback team to test.

Contact the prof (via MS Teams) if you didn't get a response from Kim within 5 minutes.

- Delete bug reports you submitted for the previous team (if any), using CATcher.

- You should not go back to testing the previous team after you've been given a fallback team to test.

b) What to test

- Test the product based on the User Guide (PDF version) available from their releases page on GitHub.

- Do system testing first i.e., does the product work as specified by the documentation? If there is time left, you can do acceptance testing as well i.e., does the product solve the problem it claims to solve?

Test based on the Developer Guide (Appendix named Instructions for Manual Testing) and the User Guide PDF files. The testing instructions in the Developer Guide can provide you some guidance but if you follow those instructions strictly, you are unlikely to find many bugs. You can deviate from the instructions to probe areas that are more likely to have bugs.

If the provided UG/DG PDF files have serious issues (e.g., some parts seem to be missing), ask the prof for permission to use the Web versions of UG/DG instead.

The DG appendix named Planned Enhancements (if it exists) gives some enhancements the team is planning for the near future. The feature flaws these enhancements address are 'known' -- reporting them will not earn you any credit.

However, you can report `type.FeatureFlaw` bugs if you think these enhancements themselves are flawed/inadequate.

You can also report `type.DocumentationBug` bugs if any of the enhancements in this list combines more than one enhancement.

You may do both system testing and acceptance testing.

Be careful when copying commands from the UG (PDF version) to the app as some PDF viewers can affect the pasted text. If that happens, you might want to open the UG in a different PDF viewer.

If the command you copied spans multiple lines, check to ensure the line break did not mess up the copied command.

c) What bugs to report?

- You may report functionality bugs, UG bugs, and feature flaws.

- You can also post suggestions on how to improve the product.

Be diplomatic when reporting bugs or suggesting improvements. For example, instead of criticising the current behavior, simply suggest alternatives to consider.

- Report functionality bugs:

- Do not post suggestions, but if the product is missing a critical functionality that makes the product less useful to the intended user, it can be reported as a bug of type `type.FeatureFlaw`. The dev team is allowed to reject bug reports framed as mere suggestions and/or lacking a convincing justification as to why the omission or the current design of that functionality is problematic.

- You may also report documentation bugs (UG bugs in particular) but keep in mind that there is another time (i.e., part II) set aside for reporting documentation bugs too.

d) How to report bugs

- Post bugs as you find them (i.e., do not wait to post all bugs at the end) because bug reports created/updated after the allocated time will not count. Even minor updates (such as changing a label) outside the allowed time window will invalidate that bug.

- Launch CATcher, and login to the correct profile (when CATcher asks, consent to creating a new repo):

- PE Dry Run: `CS2103/T PE Dry run`

- PE: `CS2103/T PE`

- Post bugs using CATcher.

- If you encounter a 'Failed to fetch' error during CATcher login, try again. If it still fails, try a different network e.g., your mobile phone's hotspot.

- If GitHub prompts you to 're-authorize' CATcher (this happens if GitHub detects a rush of login requests from the same account), just re-authorize as requested.

Issues created for PE-D and PE need to be in a precise format for our grading scripts to work. Incorrectly-formatted responses will have to be discarded. Therefore, you are not allowed to use the GitHub interface for PE-D and PE activities, unless you have obtained our permission first.

If you 'warmed up' CATcher for the PE earlier, you should already have a repo named pe in your GitHub account, created by CATcher during that warming up. If that is not the case, create a repo to post your bug reports as given in the panel below:

- Post bug reports in the `pe` repo.

- The whole description of the bug should be in the issue description i.e., do not add comments to the issue.

- Choose exactly one `type.*` label and exactly one `severity.*` label.
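The 'exactly one label of each kind' rule can be expressed as a simple check. The Python sketch below is illustrative only: `check_labels` is a hypothetical helper, not part of CATcher, and CATcher's own interface is what actually guides label selection.

```python
from typing import List


def check_labels(labels: List[str]) -> List[str]:
    """Return a list of problems; an empty list means the labels look fine."""
    problems = []
    for prefix in ("type.", "severity."):
        # Count how many labels of this kind the report carries.
        count = sum(1 for label in labels if label.startswith(prefix))
        if count != 1:
            problems.append(
                f"expected exactly one {prefix}* label, found {count}")
    return problems
```

For example, `check_labels(["type.FeatureFlaw"])` reports the missing severity label, while a report carrying one label of each kind yields an empty list.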

e) Bug report format

- Each bug should be a separate issue i.e., do not report multiple problems in the same bug report.

If there are multiple bugs in the same report, the dev team will select only one of the bugs in the report and discard the others.

- When reporting similar bugs, it is safer to report them as separate bugs because there is no penalty for reporting duplicates while putting multiple bugs in the same report can reduce your bug count (see the previous point). But as submitting multiple bug reports takes extra time, if you are quite sure they will be considered as duplicates by the dev team later, you can report them together, to save time.

- Write good quality bug reports; poor quality or incorrect bug reports will not earn credit.

Remember to give enough details for the receiving team to reproduce the bug. If the receiving team cannot reproduce the bug, you will not be able to get credit for it.

- Assign exactly one `severity.*` label to the bug report. Bug reports without a severity label are considered `severity.Low` (lower severity bugs earn lower credit).

Bug Severity labels:

- `severity.VeryLow`: A flaw that is purely cosmetic and does not affect usage e.g., typo/spacing/layout/color/font issues in the docs or the UI that don't affect usage. Only cosmetic problems should have this label.

- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.

- `severity.Medium`: A flaw that causes occasional inconvenience to some users, but they can continue to use the product.

- `severity.High`: A flaw that affects most users and causes major problems for users. i.e., only problems that make the product almost unusable for most users should have this label.

When applying for documentation bugs, replace user with reader.

- Assign exactly one `type.*` label to the issue.

Type labels:

- `type.FunctionalityBug`: A functionality does not work as specified/expected.

- `type.FeatureFlaw`: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage. i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features.

These issues are counted against the product design aspect of the project. Therefore, other design problems (e.g., low testability, mismatches to the target user/problem, project constraint violations etc.) can be put in this category as well.

Features that work as specified by the UG but should have been designed to work differently (from the end-user's point of view) fall in this category too.

- `type.DocumentationBug`: A flaw in the documentation e.g., a missing step, a wrong instruction, typos

- If you need to include `<` or `>` symbols in your bug report, you can either use `\` to escape them (i.e., use `\<` and `\>` e.g., `x \< y` instead of `x < y`) or wrap them inside back-ticks.

Reason: CATcher and GitHub strip out content wrapped in `<` and `>`, for security reasons.
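As an illustration of the two workarounds for angle brackets, here is a small Python sketch. Both helper names are hypothetical; when writing a report you would simply type the escaped text into CATcher yourself.

```python
def escape_angle_brackets(text: str) -> str:
    # Prefix each angle bracket with a backslash so it is shown literally
    # instead of being stripped out as markup.
    # Not idempotent: escaping already-escaped text adds a second backslash.
    return text.replace("<", "\\<").replace(">", "\\>")


def wrap_in_backticks(text: str) -> str:
    # Alternative: present the text as inline code, assuming the text
    # itself contains no back-ticks.
    return "`" + text + "`"


print(escape_angle_brackets("add n/<NAME>"))  # prints: add n/\<NAME\>
```

Either form keeps a command format such as `add n/<NAME>` visible in the submitted report.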

When in doubt, choose the lower severity: If the severity of a bug seems to be smack in the middle of two severity levels, choose the lower severity (unless much closer to the higher one than the lower one).

- Reason: The teaching team follows the same policy when adjudicating disputed severity levels in the last phase of the PE.

- As the tester, you might feel like you are throwing away marks by choosing a lower severity; but the lower severity has a lower risk of being disputed by the dev team, giving you (and the dev team) a better chance of earning bonus marks for accuracy (which will be substantial).

To make your case stronger, state in the bug report why you think the bug might even qualify for a higher severity, while you actually chose the lower one.

- In this section, there will be a mass identity check.

Mass identity check

When the invigilator announces an identity check,

- remove mask (if any)

- turn towards the camera

- move closer to the camera (but do not adjust the camera position) -- no need to show the student card

- hold that pose until the invigilator has taken a screenshot and asks you to go back to the PE activity.

Please comply quickly as non-compliance can delay the PE for everyone. This is expected to take no more than 15 seconds.

PE Phase 1 - Part II Evaluating Documents [30 minutes]

- This slot is for reporting documentation bugs only. You may report bugs related to the UG and the DG.

Only the content of the UG/DG PDF files (not the online version) should be considered.

- For each bug reported, cite evidence and justify. For example, if you think the explanation of a feature is too brief, explain what information is missing and why the omission hinders the reader.

Do not report bugs that are not contained within the UG and DG PDF files (e.g., bugs in the `README.md`).

You may visit the team's project on GitHub during this portion, for the purpose of verifying the accuracy of documentation e.g., to check if a diagram matches the code. You are also allowed to download and open the team's code in a code editor.

You may report grammar issues as bugs but note that minor grammar issues that don't hinder the reader are allowed to be categorized as `response.NotInScope` (by the receiving team) -- such bugs earn only a small amount of credit for the tester (hence, do not waste time reporting too many minor grammar errors).

PE Phase 1 - Part III Overall Evaluation [15 minutes]

- To be submitted via TEAMMATES. You are expected to complete this during the PE session itself, but you have until the end of the day to submit (or revise) your submissions.

If you have a valid reason to leave the PE early (e.g., having another exam right after the PE), you may leave after part II has ended and do part III later -- but note that if you fail to submit this by the hard deadline (i.e., end of the day), you will receive an automatic penalty.

- The TEAMMATES email containing the submission link should have reached you the day before the PE. If you didn't receive it by then, you can request it to be resent from this page.

- If the TEAMMATES submission page is slow/fails to load (all of you accessing it at the same time is likely to overload the server), wait 3-5 minutes and try again. Do not refresh the page rapidly as that will overload the server even more, and recovery can take even longer.

PE Phase 2: Developer Response

This phase is for you to respond to the bug reports you received. Done during Sat-Mon after PE.

Deadline: Mon, Apr 22nd 2359

Yes, that can be better! For each bug report you receive, if you think a software engineer who takes pride in their own work would say "yes, that can be better!", accept it graciously, even if you can come up with some argument to justify the current behavior.

Even when you still want to defend the current behavior, instead of pretending that the behavior was a deliberate choice to begin with, you can say something like,

"Thanks for raising this. Indeed, it didn't occur to us. But now that we have thought about it, we still feel .."

Some bugs are 'expected'. Given the short time you had for the tP and your inexperience in SE team projects, this work is not expected to be totally bug free. The grading scheme factors that in already -- i.e., your grade will not suffer if you accept a few bugs in this phase.

Bonus marks for high accuracy rates!

You will receive bonus marks if a high percentage (e.g., some bonus if >60%, a substantial bonus if >80%) of bugs are accepted as triaged (i.e., the eventual type.*, severity.*, and response.* of the bug match the ones you chose).

It's not bargaining!

When the tester and the dev team cannot reach a consensus, the teaching team will select either the dev team position or the tester position as the final state of the bug, whichever appears to be closer to being reasonable. The teaching team will not come up with our own position, or choose a middle ground.

Hence, do not be tempted to argue for an unreasonable position in the hope that you'll receive something less than asked but still in your favor e.g., if the tester chose severity.High but you think it should be severity.Medium, don't argue for severity.VeryLow in the hope that the teaching team will decide a middle ground of severity.Low or severity.Medium. It's more likely that the teaching team will choose the tester's position as yours seems unreasonable.

More importantly, this is not bargaining between two parties; it's an attempt to determine the true nature of the bug, and your ability to do so (which is an important skill).

Favor response.NotInScope over response.Rejected

If there is even the slightest chance that the change directly suggested (or indirectly hinted at) by a bug report is an improvement that you might consider doing in a future version of the product, choose response.NotInScope.

Choose response.Rejected only for bug reports that are clearly incorrect (e.g., the tester misunderstood something).

Accordingly, it is typical for a team to have a lot more response.NotInScope bugs and very few response.Rejected bugs.

Note that response.NotInScope bugs earn a small amount of credit for the tester without any penalty for the dev team, unless there is an unusually high number of such bugs for a team.

Bug reviewing is recommended to be done as a team as some of the decisions need team consensus.

Instructions for Reviewing Bug Reports

- Don't freak out if there are a lot of bug reports. Many can be duplicates and some can be false positives. In any case, we anticipate that all of these products will have some bugs and our penalty for bugs is not harsh. Furthermore, it depends on the severity of the bug. Some bugs may not even be penalized.

- Nit-picking is a good sign: If you receive a lot of nit-picking type of bugs that make you roll your eyes, it means testers were unable to find more serious bugs. That's a good thing.

- Not exactly zero-sum: As mentioned earlier, the penalty for having a specific bug is not the same as the reward for reporting that bug (it's not a zero-sum game). For example, the reward for testers will be higher (because we don't expect the products to have that many bugs after they have gone through so much prior testing).

The penalty for a minor bug is unlikely to make a difference in your final grade, especially given that the penalty applies only if you have more than a certain number of bugs.

For example, in a typical case a developer might be assigned 5+ severity.VeryLow bugs before the penalty even starts affecting their marks.

Accordingly, we hope you'll accept bug reports graciously (rather than fight tooth-and-nail to reject every bug report received) if you think the bug is within the ballpark of 'reasonable'. Those minor bugs are really not worth stressing/fighting over.

- CATcher does not come with a UG, but the UI is fairly intuitive (there are tool tips too). Do post in the forum if you need any guidance with its usage.

- Tip: If you think others might be editing the same issues at the same time, use the `Sync` button at the top to force-sync your view with the latest data from GitHub.

- Go to the CATcher Web app, and log in to the profile `CS2103/T PE`. It will show all the bugs assigned to your team, divided into three sections:

- `Issues Pending Responses` - Issues that your team has not processed yet.

- `Issues Responded` - Your job is to get all issues to this category.

- `Faulty Issues` - e.g., Bugs marked as duplicates of each other, or causing circular duplicate relationships. Fix the problem given so that no issues remain in this category.

- Respond to the bug reports shown.

You must use CATcher. You are strictly prohibited from editing PE bug reports using the GitHub Web interface as it can render bug reports unprocessable by CATcher, sometimes in irreversible ways, and can affect the entire class. Please contact the prof if you are unable to use CATcher for some reason.

- If a bug seems to be for a different product (i.e. wrongly assigned to your team), let us know ASAP.

- If the bug is reported multiple times,

- Mark all copies EXCEPT one as duplicates of the one left out (let's call that one the original) using the `A Duplicate of` tick box.

- For each group of duplicates, all duplicates should point to one original i.e., no multiple levels of duplicates, and no cyclical duplication relationships.

- If the duplication status is eventually accepted, all duplicates will be assumed to have inherited the `type.*` and `severity.*` from the original.
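The duplicate rules above amount to one constraint: an issue that is marked as a duplicate must point to an issue that is not itself a duplicate. The Python sketch below checks that constraint on a hypothetical mapping of issue numbers; it is not how CATcher detects faulty issues internally, only an illustration of the rule.

```python
from typing import Dict, List


def find_duplicate_problems(duplicate_of: Dict[int, int]) -> List[str]:
    """Check a mapping of issue number -> the original it is a duplicate of."""
    problems = []
    for dup, original in duplicate_of.items():
        if dup == original:
            problems.append(f"#{dup} is marked as a duplicate of itself")
        elif original in duplicate_of:
            # The target is itself a duplicate: this is either a multi-level
            # chain or part of a cycle, and both are disallowed.
            problems.append(
                f"#{dup} points to #{original}, which is itself a duplicate")
    return problems
```

For instance, `{2: 1, 3: 1}` is fine (two duplicates of the same original), `{3: 2, 2: 1}` flags #3 for creating a second level, and `{1: 2, 2: 1}` flags both issues because they form a cycle.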

- If you cannot reproduce the bug based on the info given by the tester you are still expected to make a reasonable attempt to go beyond the information provided by the tester to reproduce the bug, if there is clear evidence of something wrong.

For example, the screenshot in the bug report clearly shows an error message that should not appear, but you can't reproduce the error message based on the info given by the tester. Perhaps the error was caused by something else the tester did although the tester didn't realize it is connected to the error. In this case, based on the error message, you might be in a better position to figure out the real cause of the error. If you don't, the decision can go against you in a later phase if either the tester or the moderator figures out how to reproduce the error and the moderator decides that it is something you should have been able to figure out yourself.

- Apply one of these labels (if missing, we assign: `response.Accepted`)

Response Labels:

- `response.Accepted`: You accept it as a valid bug.

- `response.NotInScope`: It is a valid issue, but fixing it is less important than the work done in the current version of the product e.g., it was not related to features delivered in v1.4 or lower priority than the work already done in v1.4.

- `response.Rejected`: What the tester treated as a bug is in fact the expected and correct behavior (from the user's point of view), or the tester was mistaken in some other way. Note: Disagreement with the bug severity/type given by the tester is not a valid reason to reject the bug.

- `response.CannotReproduce`: You are unable to reproduce the behavior reported in the bug after multiple tries.

- `response.IssueUnclear`: The issue description is not clear. Don't post comments asking the tester to give more info. The tester will not be able to see those comments because the bug reports are anonymous.

Only the response.Accepted bugs are counted against the dev team. While response.NotInScope bugs are not counted against the dev team, they can earn a small amount of consolation marks for the tester. The other three do not affect marks of either the dev team or the tester, except when calculating bonus marks for accuracy.

- If you disagree with the original bug type assigned to the bug, you may change it to the correct type.

Type labels:

- type.FunctionalityBug: A functionality does not work as specified/expected.

- type.FeatureFlaw: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage. i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features.

  These issues are counted against the product design aspect of the project. Therefore, other design problems (e.g., low testability, mismatches to the target user/problem, project constraint violations etc.) can be put in this category as well.

  Features that work as specified by the UG but should have been designed to work differently (from the end-user's point of view) fall in this category too.

- type.DocumentationBug: A flaw in the documentation e.g., a missing step, a wrong instruction, typos

If a bug fits multiple types equally well, the team is free to choose the one they think is the best match, but keep the type chosen by the tester if it is one of the types that fit the bug equally well.

- If you disagree with the original severity assigned to the bug, you may change it to the correct level.

Bug Severity labels:

- severity.VeryLow: A flaw that is purely cosmetic and does not affect usage e.g., a typo/spacing/layout/color/font issues in the docs or the UI that doesn't affect usage. Only cosmetic problems should have this label.

- severity.Low: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.

- severity.Medium: A flaw that causes occasional inconvenience to some users, but they can continue to use the product.

- severity.High: A flaw that affects most users and causes major problems for users. i.e., only problems that make the product almost unusable for most users should have this label.

When applying for documentation bugs, replace user with reader.

- If you need the teaching team's inputs when deciding on a bug (e.g., if you are not sure if the UML notation is correct), post in the forum. Remember to quote the issue number shown in CATcher (it appears at the end of the issue title).

Keep in mind that the bug triaging accuracy affects your marks, and therefore, the teaching team prefers not to dictate a specific response, type, or severity for a particular bug report (i.e., that decision should be yours). Nevertheless, we can provide some general comments relevant to the issue at hand. Additionally, we encourage other students to chime in with their opinions, as such discussions have learning value.

- Decide who should take responsibility for the bug. Use the Assignees field to assign the issue to that person(s). There is no need to actually fix the bug though. It's simply an indication/acceptance of responsibility. If there is no assignee, we will distribute the penalty for that bug (if any) equally among all team members e.g., if the penalty is -0.4 and there are 4 members, each member will be penalized -0.1.

- If it is not easy to decide the assignee(s), we recommend (but do not enforce) that the feature owner should be assigned bugs related to the feature. Reason: The feature owner should have defended the feature against bugs using automated tests and defensive coding techniques.

- It is also fine to not assign a bug to anyone, in which case the penalty will be divided equally among team members.
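The penalty arithmetic for unassigned bugs can be sketched as follows. The function and parameter names are hypothetical, and splitting equally among multiple assignees is an assumption made for illustration; the text above only states the equal split for the no-assignee case.

```python
# Hypothetical sketch; names are illustrative and not taken from the grading scripts.
def split_penalty(penalty, assignees, team_members):
    """Return each person's share of a bug's penalty.

    With no assignee, the penalty is divided equally among all team members
    (as stated above). With assignees, an equal split among them is assumed.
    """
    bearers = list(assignees) if assignees else list(team_members)
    share = penalty / len(bearers)
    return {member: share for member in bearers}


# The example from the text: a -0.4 penalty, no assignee, 4 team members.
shares = split_penalty(-0.4, [], ["alice", "bob", "chen", "devi"])
```

Here each of the four members ends up with a share of -0.1, matching the example in the text.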

As far as possible, choose the correct type.*, severity.*, response.*, assignees, and duplicate status even for bugs you are not accepting. Reason: your non-acceptance may be rejected in a later phase, in which case we need to grade it as an accepted bug.

If a bug's 'duplicate' status is rejected later (i.e., the tester says it is not really a duplicate and the teaching team agrees with the tester), it will inherit the response/type/severity/assignees from the 'original' bug that it was claimed to be a duplicate of.

- Justify your response. For all the following cases, you must add a comment justifying your stance. Testers will get to respond to all those cases, and their responses will be considered by the teaching team in later phases (when resolving disputed bug reports).

If you don't provide a justification and the tester disagrees with your decision, the teaching team will have no choice but to rule in favor of the tester.

- downgrading severity

- non-acceptance of a bug

- changing the bug type

- non-obvious duplicate

- You can also refer to the below guidelines:

PE Phase 3: Tester Response

In this phase you will receive the dev team's response to the bugs you reported, and will give your own counter response (if needed). Done during Tue-Thu after PE

Start: Within 1 day after Phase 2 ends.

While you are waiting for Phase 3 to start, comments will be added to the bug reports in your /pe repo, to indicate the response each received from the receiving team. Please do not edit any of those comments or reply to them via the GitHub interface. Doing so can invalidate them, in which case the grading script will assume that you agree with the dev team's response. Instead, wait till the start of Phase 3 is announced, after which you should use CATcher to respond.

Deadline: Thu, Apr 25th 2359

- In this phase you will get to state whether you agree or disagree with the dev team's response to the bugs you reported. If a bug reported has been subjected to any of the below by the dev team, you can record your objections and the reason for the objection.

- not accepted

- severity downgraded

- bug type changed

- bug flagged as duplicate (Note that you still get credit for bugs flagged as duplicates, unless you reported both bugs yourself. Nevertheless, it is in your interest to object to incorrect duplicate flags because when a bug is reported by more testers, it will be considered an 'obvious' bug and will earn slightly less credit than otherwise)

Don't feel upset if the dev team did not totally agree with most of the bugs you reported. That is to be expected, given that you had very little time to make those bug decisions while the dev team had a lot more time to deliberate about them. Some may have given unreasonable (in your opinion) arguments against your bug reports; not to worry, just give your counter-arguments and leave it to the teaching team to decide (in the next phase) which position is more reasonable.

However, if the dev team's argument is not too far from 'reasonable', it may be better to agree than disagree.

Reason: an incorrect counterargument at this phase will lower your accuracy more than an incorrect decision made during the testing phase (because you now have more time to think about the bug) i.e., changing your position after you had more time to think of it and after having seen more information is encouraged, compared to sticking to your initial position 'no matter what'.

- If you would like to revise your own initial type/severity in response to the team's inputs, you can state that in your explanation e.g., you rated the bug severity.High and the team changed it to severity.Low but now you think it should be severity.Medium (do not change the original labels yourself though).

- You can also refer to the below guidelines, mentioned during the previous phase as well:

- If the dev team disagreed with an aspect (i.e., type/severity/) and you now agree with the dev team's position, it will not hurt your accuracy rating. Here are some examples (for the severity.*):

| Tester choice | Dev choice | Tester reaction | Teacher decision | Dev accuracy | Tester accuracy |
|---|---|---|---|---|---|
| High | agreed | | | | |
| High | Low | agreed | | | no effect |
| High | Low | disagreed | High | | |
| High | Low | disagreed | Low | | |

- If you do not respond to a dev response, we'll assume that you agree with it.

- Procedure:

- When the phase has been announced as open, login to CATcher as usual (profile: CS2103/T PE).

- For each issue listed in the Issues Pending Responses section:

  - Click on it to go to the details, and read the dev team's response.

  - If you disagree with any of the items listed, tick the I disagree tick box, enter your justification for the disagreement, and click Save.

  - If you are fine with the team's changes, click Save without any other changes, upon which the issue will move to the Issue Responded section.

- No action is required for the bugs the team accepted exactly as you reported them (i.e., no change to type or severity). They are shown in CATcher for your reference only.

You must use CATcher. You are strictly prohibited from editing PE bug reports using the GitHub Web interface as it can render bug reports unprocessable by CATcher, sometimes in irreversible ways, and can affect the entire class. Please contact the prof if you are unable to use CATcher for some reason.